AutoMM for Text - Quick Start

MultiModalPredictor can solve problems where the data consist of images, text, numerical values, or categorical features. To get started, we first demonstrate how to use it to solve problems that only contain text. We pick two classical NLP problems for the purpose of demonstration:

- Sentiment analysis
- Sentence similarity

Here, we format the NLP datasets as data tables where the feature columns contain text fields and the label column contains numerical (regression) or categorical (classification) values. Each row in the table corresponds to one training sample.
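A minimal sketch of what "data table" means here, using plain Python dicts (one key per column, one list entry per row) instead of pandas so it runs without AutoGluon installed. The rows are copied from the SST and STS samples shown later in this tutorial; the helpers `num_rows` and `rows` are hypothetical names for illustration. The same dict-of-lists shape is what the tutorial later passes to `predictor.predict()`, and `pandas.DataFrame(sst_table)` would turn it into the DataFrame form used for `fit()`.

```python
# Two demo tasks as column-oriented tables: column name -> list of values.
# Position i in every list belongs to row i (one training sample).
sst_table = {
    "sentence": ["very pleasing at its best moments", "hard ground"],
    "label": [1, 0],  # categorical label -> classification
}

sts_table = {
    "sentence1": ["A plane is taking off.", "A man is smoking."],
    "sentence2": ["An air plane is taking off.", "A man is skating."],
    "score": [5.00, 0.50],  # numerical label -> regression
}


def num_rows(table):
    """Return the number of rows, checking that every column has the same length."""
    lengths = {len(values) for values in table.values()}
    if len(lengths) != 1:
        raise ValueError(f"columns have different lengths: {sorted(lengths)}")
    return lengths.pop()


def rows(table):
    """Yield one dict per row, i.e. one training sample at a time."""
    columns = list(table)
    for i in range(num_rows(table)):
        yield {col: table[col][i] for col in columns}


for row in rows(sts_table):
    print(row)
```

Note that the STS table has two text columns; a table may contain several feature columns alongside the single label column.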

```python
%matplotlib inline
import numpy as np
import warnings
import matplotlib.pyplot as plt
warnings.filterwarnings('ignore')
np.random.seed(123)
```

Sentiment Analysis Task

First, we consider the Stanford Sentiment Treebank (SST) dataset, which consists of movie reviews and their associated sentiment. Given a new movie review, the goal is to predict the sentiment reflected in the text (in this case a binary classification, where reviews are labeled as 1 if they convey a positive opinion and labeled as 0 otherwise). Let’s first load and look at the data, noting the labels are stored in a column called label.

```python
from autogluon.core.utils.loaders import load_pd

train_data = load_pd.load('https://autogluon-text.s3-accelerate.amazonaws.com/glue/sst/train.parquet')
test_data = load_pd.load('https://autogluon-text.s3-accelerate.amazonaws.com/glue/sst/dev.parquet')
subsample_size = 1000  # subsample data for faster demo, try setting this to larger values
train_data = train_data.sample(n=subsample_size, random_state=0)
train_data.head(10)
```

| | sentence | label |
|---|---|---|
| 43787 | very pleasing at its best moments | 1 |
| 16159 | , american chai is enough to make you put away... | 0 |
| 59015 | too much like an infomercial for ram dass 's l... | 0 |
| 5108 | a stirring visual sequence | 1 |
| 67052 | cool visual backmasking | 1 |
| 35938 | hard ground | 0 |
| 49879 | the striking , quietly vulnerable personality ... | 1 |
| 51591 | pan nalin 's exposition is beautiful and myste... | 1 |
| 56780 | wonderfully loopy | 1 |
| 28518 | most beautiful , evocative | 1 |

Above, the data happen to be stored in the Parquet format, but you can also directly load() data from a CSV file or other equivalent formats. While here we load files from AWS S3 cloud storage, these could instead be local files on your machine. After loading, train_data is simply a Pandas DataFrame, where each row represents a different training example.

Training

To ensure this tutorial runs quickly, we simply call fit() with a subset of 1000 training examples and limit its runtime to approximately 3 minutes (time_limit=180, in seconds). To achieve reasonable performance in your applications, we recommend setting a much longer time_limit (e.g., 1 hour) or not specifying time_limit at all (time_limit=None).

```python
import uuid

from autogluon.multimodal import MultiModalPredictor

# A unique folder name so repeated runs do not overwrite each other.
model_path = f"./tmp/{uuid.uuid4().hex}-automm_sst"
predictor = MultiModalPredictor(label='label', eval_metric='acc', path=model_path)
predictor.fit(train_data, time_limit=180)
```

```text
The cache for model files in Transformers v4.22.0 has been updated. Migrating your old cache. This is a one-time only operation. You can interrupt this and resume the migration later on by calling transformers.utils.move_cache().
Moving 0 files to the new cache system
0it [00:00, ?it/s]
Global seed set to 123
Downloading /home/ci/autogluon/multimodal/src/autogluon/multimodal/data/templates.zip from https://automl-mm-bench.s3.amazonaws.com/few_shot/templates.zip...
Auto select gpus: [0]
Using 16bit native Automatic Mixed Precision (AMP)
GPU available: True (cuda), used: True
TPU available: False, using: 0 TPU cores
IPU available: False, using: 0 IPUs
HPU available: False, using: 0 HPUs
LOCAL_RANK: 0 - CUDA_VISIBLE_DEVICES: [0]

  | Name              | Type                         | Params
-------------------------------------------------------------------
0 | model             | HFAutoModelForTextPrediction | 108 M
1 | validation_metric | Accuracy                     | 0
2 | loss_func         | CrossEntropyLoss             | 0
-------------------------------------------------------------------
108 M     Trainable params
0         Non-trainable params
108 M     Total params
217.786   Total estimated model params size (MB)
Epoch 0, global step 3: 'val_acc' reached 0.59500 (best 0.59500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/132ce79f9c604c61b9c5ae1713d14240-automm_sst/epoch=0-step=3.ckpt' as top 3
/home/ci/opt/venv/lib/python3.8/site-packages/pytorch_lightning/utilities/cloud_io.py:33: LightningDeprecationWarning: pytorch_lightning.utilities.cloud_io.get_filesystem has been deprecated in v1.8.0 and will be removed in v1.10.0. Please use lightning_lite.utilities.cloud_io.get_filesystem instead.
  rank_zero_deprecation(
/home/ci/opt/venv/lib/python3.8/site-packages/pytorch_lightning/utilities/cloud_io.py:25: LightningDeprecationWarning: pytorch_lightning.utilities.cloud_io.atomic_save has been deprecated in v1.8.0 and will be removed in v1.10.0. This function is internal but you can copy over its implementation.
  rank_zero_deprecation(
Epoch 0, global step 7: 'val_acc' reached 0.68000 (best 0.68000), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/132ce79f9c604c61b9c5ae1713d14240-automm_sst/epoch=0-step=7.ckpt' as top 3
Epoch 1, global step 10: 'val_acc' reached 0.65500 (best 0.68000), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/132ce79f9c604c61b9c5ae1713d14240-automm_sst/epoch=1-step=10.ckpt' as top 3
Epoch 1, global step 14: 'val_acc' reached 0.84500 (best 0.84500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/132ce79f9c604c61b9c5ae1713d14240-automm_sst/epoch=1-step=14.ckpt' as top 3
Epoch 2, global step 17: 'val_acc' reached 0.87000 (best 0.87000), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/132ce79f9c604c61b9c5ae1713d14240-automm_sst/epoch=2-step=17.ckpt' as top 3
Epoch 2, global step 21: 'val_acc' reached 0.92500 (best 0.92500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/132ce79f9c604c61b9c5ae1713d14240-automm_sst/epoch=2-step=21.ckpt' as top 3
Epoch 3, global step 24: 'val_acc' reached 0.90500 (best 0.92500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/132ce79f9c604c61b9c5ae1713d14240-automm_sst/epoch=3-step=24.ckpt' as top 3
Epoch 3, global step 28: 'val_acc' reached 0.93500 (best 0.93500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/132ce79f9c604c61b9c5ae1713d14240-automm_sst/epoch=3-step=28.ckpt' as top 3
Epoch 4, global step 31: 'val_acc' reached 0.93500 (best 0.93500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/132ce79f9c604c61b9c5ae1713d14240-automm_sst/epoch=4-step=31.ckpt' as top 3
Epoch 4, global step 35: 'val_acc' reached 0.93000 (best 0.93500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/132ce79f9c604c61b9c5ae1713d14240-automm_sst/epoch=4-step=35.ckpt' as top 3
Epoch 5, global step 38: 'val_acc' was not in top 3
Epoch 5, global step 42: 'val_acc' reached 0.93500 (best 0.93500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/132ce79f9c604c61b9c5ae1713d14240-automm_sst/epoch=5-step=42.ckpt' as top 3
Epoch 6, global step 45: 'val_acc' was not in top 3
Epoch 6, global step 49: 'val_acc' was not in top 3
Epoch 7, global step 52: 'val_acc' was not in top 3
Time limit reached. Elapsed time is 0:03:03. Signaling Trainer to stop.
Epoch 7, global step 52: 'val_acc' was not in top 3
Global seed set to 123
Global seed set to 123
Global seed set to 123
```

<autogluon.multimodal.predictor.MultiModalPredictor at 0x7f4a352c1850>

Above we specify that: the column named label contains the label values to predict, AutoGluon should optimize its predictions for the accuracy evaluation metric, trained models should be saved under model_path (a uniquely named folder in ./tmp/ ending in automm_sst), and training should run for around 180 seconds (time_limit=180).

Evaluation

After training, we can easily evaluate our predictor on separate test data formatted similarly to our training data.

```python
test_score = predictor.evaluate(test_data)
print(test_score)
```

Global seed set to 123

{'acc': 0.893348623853211}

By default, evaluate() reports the evaluation metric specified earlier, which is accuracy in our example. You may also specify additional metrics, e.g. F1 score, when calling evaluate().

```python
test_score = predictor.evaluate(test_data, metrics=['acc', 'f1'])
print(test_score)
```

Global seed set to 123

{'acc': 0.893348623853211, 'f1': 0.8963210702341138}
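To make the two numbers above concrete, here is a sketch of how accuracy and binary F1 are computed from true and predicted labels. It uses toy labels rather than the SST test set, and it assumes that `'f1'` means the F1 score of the positive class (label 1), the usual convention for binary problems; check the AutoGluon metric documentation if your positive class differs.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def f1_binary(y_true, y_pred, positive=1):
    """F1 of the positive class: harmonic mean of precision and recall."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    if tp == 0:
        # No true positives: precision or recall is 0 (or undefined), so report 0.
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Toy labels (not the SST test set): 1 = positive, 0 = negative.
y_true = [1, 1, 1, 0, 0, 1]
y_pred = [1, 1, 0, 0, 1, 1]
print(accuracy(y_true, y_pred))   # 4 of 6 predictions are correct
print(f1_binary(y_true, y_pred))  # → 0.75 (precision 3/4, recall 3/4)
```

Accuracy counts every correct prediction equally, while F1 focuses on how well the positive class is recovered, which is why the two scores reported above can differ.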

Prediction

You can easily obtain predictions from the trained predictor by calling predictor.predict().

```python
sentence1 = "it's a charming and often affecting journey."
sentence2 = "It's slow, very, very, very slow."
predictions = predictor.predict({'sentence': [sentence1, sentence2]})
print('"Sentence":', sentence1, '"Predicted Sentiment":', predictions[0])
print('"Sentence":', sentence2, '"Predicted Sentiment":', predictions[1])
```

Global seed set to 123

"Sentence": it's a charming and often affecting journey. "Predicted Sentiment": 1

"Sentence": It's slow, very, very, very slow. "Predicted Sentiment": 0

For classification tasks, you can ask for predicted class-probabilities instead of predicted classes.

```python
probs = predictor.predict_proba({'sentence': [sentence1, sentence2]})
print('"Sentence":', sentence1, '"Predicted Class-Probabilities":', probs[0])
print('"Sentence":', sentence2, '"Predicted Class-Probabilities":', probs[1])
```

Global seed set to 123

"Sentence": it's a charming and often affecting journey. "Predicted Class-Probabilities": [8.263575e-04 9.991735e-01]

"Sentence": It's slow, very, very, very slow. "Predicted Class-Probabilities": [0.9961792 0.00382077]

We can just as easily produce predictions over an entire dataset.

```python
test_predictions = predictor.predict(test_data)
test_predictions.head()
```

Global seed set to 123

```text
0    1
1    1
2    1
3    1
4    0
Name: label, dtype: int64
```
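For a binary classifier, the class returned by predict() normally corresponds to the column of predict_proba() with the highest probability. The sketch below checks this on the two probability rows printed earlier for sentence1 and sentence2, assuming no custom decision threshold is in play; as in that output, column 0 is label 0 and column 1 is label 1.

```python
# Class-probability rows copied from the predict_proba output earlier
# (column 0 = label 0, column 1 = label 1).
probs = [
    [8.263575e-04, 9.991735e-01],
    [0.9961792, 0.00382077],
]


def argmax(values):
    """Index of the largest value; on ties, max() keeps the first one."""
    return max(range(len(values)), key=values.__getitem__)


predicted = [argmax(row) for row in probs]
print(predicted)  # → [1, 0], the same labels predictor.predict() returned
```

Each row sums to approximately 1, so the probabilities can also be read as the model's confidence in each label.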

Save and Load

The trained predictor is automatically saved at the end of fit(), and you can easily reload it.

Warning

MultiModalPredictor.load() uses the pickle module implicitly, which is known to be insecure. It is possible to construct malicious pickle data that will execute arbitrary code during unpickling. Never load data that could have come from an untrusted source or that could have been tampered with. Only load data you trust.
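The warning above is about provenance. One way to act on it, sketched here under our own assumptions rather than as an AutoGluon feature, is to record a SHA-256 digest of the saved predictor folder right after fit() and refuse to load the folder later if its contents no longer match. `directory_digest` and `verify_before_load` are hypothetical helpers written for this example. They only detect changes made after you recorded the digest, the digest itself must be stored somewhere an attacker cannot modify, and they do not make unpickling untrusted data safe.

```python
import hashlib
import os


def directory_digest(path):
    """Hypothetical helper: one SHA-256 digest covering every file name and body under `path`."""
    entries = []
    for root, _dirs, files in os.walk(path):
        for name in files:
            full_path = os.path.join(root, name)
            # Forward slashes keep the digest independent of the OS path separator.
            rel_path = os.path.relpath(full_path, path).replace(os.sep, "/")
            file_hash = hashlib.sha256()
            with open(full_path, "rb") as f:
                # Read in 1 MiB chunks so large checkpoint files are not loaded into memory at once.
                for chunk in iter(lambda: f.read(1 << 20), b""):
                    file_hash.update(chunk)
            entries.append(f"{rel_path}\t{file_hash.hexdigest()}\n")
    # Sorting makes the result independent of the order os.walk visits files.
    return hashlib.sha256("".join(sorted(entries)).encode("utf-8")).hexdigest()


def verify_before_load(path, trusted_digest):
    """Hypothetical helper: raise unless `path` still matches a digest you recorded yourself."""
    actual = directory_digest(path)
    if actual != trusted_digest:
        raise RuntimeError(f"{path} does not match the trusted digest; refusing to load it")


# Intended use with the tutorial's objects (not executed here):
# trusted = directory_digest(model_path)   # right after predictor.fit(...)
# ...store `trusted` somewhere safe...
# verify_before_load(model_path, trusted)
# loaded_predictor = MultiModalPredictor.load(model_path)
```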

```python
loaded_predictor = MultiModalPredictor.load(model_path)
loaded_predictor.predict_proba({'sentence': [sentence1, sentence2]})
```

Global seed set to 123

```text
array([[8.263575e-04, 9.991735e-01],
       [9.961792e-01, 3.820765e-03]], dtype=float32)
```

You can also save the predictor to any location by calling .save().

```python
new_model_path = f"./tmp/{uuid.uuid4().hex}-automm_sst"
loaded_predictor.save(new_model_path)
loaded_predictor2 = MultiModalPredictor.load(new_model_path)
loaded_predictor2.predict_proba({'sentence': [sentence1, sentence2]})
```

Global seed set to 123

```text
array([[8.263575e-04, 9.991735e-01],
       [9.961792e-01, 3.820765e-03]], dtype=float32)
```

Extract Embeddings

You can also use a trained predictor to extract embeddings, which map each row of the data table to an embedding vector taken from intermediate neural network representations of that row.

```python
embeddings = predictor.extract_embedding(test_data)
print(embeddings.shape)
```

Global seed set to 123

(872, 768)

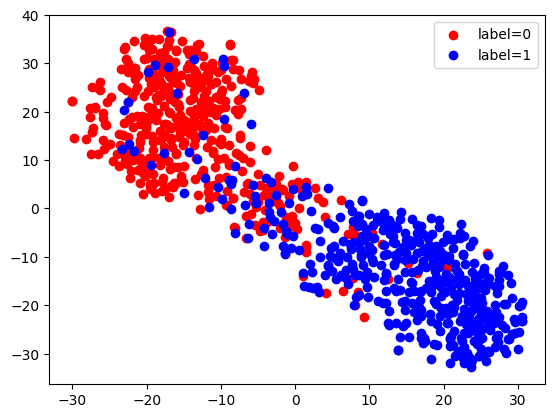

Here, we use t-SNE to visualize these extracted embeddings. We can see two clusters corresponding to our two labels, since this network has been trained to discriminate between these labels.

```python
from sklearn.manifold import TSNE

# Project the 768-dimensional embeddings down to 2-D for plotting.
X_embedded = TSNE(n_components=2, random_state=123).fit_transform(embeddings)
for val, color in [(0, 'red'), (1, 'blue')]:
    # Boolean mask selecting the test rows with this label.
    mask = test_data['label'].to_numpy() == val
    plt.scatter(X_embedded[mask, 0], X_embedded[mask, 1], c=color, label=f'label={val}')
plt.legend(loc='best')
```

<matplotlib.legend.Legend at 0x7f49200de610>
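The t-SNE plot is a visual check. A simple numeric counterpart, sketched here as our own addition rather than part of AutoMM, is to compute a centroid per label and assign each embedding to the label whose centroid is most similar by cosine similarity (a nearest-centroid classifier). The 3-dimensional toy vectors below stand in for the 768-dimensional rows of `embeddings`; in practice you would group the rows of `embeddings` by `test_data['label']` to build the centroids.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def centroid(vectors):
    """Element-wise mean of a list of vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def nearest_centroid(embedding, centroids):
    """Label whose centroid has the highest cosine similarity to `embedding`."""
    return max(centroids, key=lambda label: cosine(embedding, centroids[label]))


# Toy 3-d "embeddings" grouped by label (stand-ins, not real model outputs).
toy = {
    0: [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]],
    1: [[0.1, 0.9, 0.2], [0.0, 0.8, 0.3]],
}
centroids = {label: centroid(vecs) for label, vecs in toy.items()}
print(nearest_centroid([0.7, 0.3, 0.0], centroids))  # → 0
```

If the embeddings separate well by label, as the plot suggests, this kind of classifier should agree with the true labels on most test rows.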

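Continuous Training

You can also load a predictor and call .fit() again to continue training the same predictor with new data. As the log below shows, this second fit() saves to a new path rather than overwriting the loaded predictor; the log suggests setting resume=True if you want to keep using the original path.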

```python
new_predictor = MultiModalPredictor.load(new_model_path)
new_predictor.fit(train_data, time_limit=30)
test_score = new_predictor.evaluate(test_data, metrics=['acc', 'f1'])
print(test_score)
```

```text
Global seed set to 123
A new predictor save path is created.This is to prevent you to overwrite previous predictor saved here.You could check current save path at predictor._save_path.If you still want to use this path, set resume=True
Auto select gpus: [0]
Using 16bit native Automatic Mixed Precision (AMP)
GPU available: True (cuda), used: True
TPU available: False, using: 0 TPU cores
IPU available: False, using: 0 IPUs
HPU available: False, using: 0 HPUs
LOCAL_RANK: 0 - CUDA_VISIBLE_DEVICES: [0]

  | Name              | Type                         | Params
-------------------------------------------------------------------
0 | model             | HFAutoModelForTextPrediction | 108 M
1 | validation_metric | Accuracy                     | 0
2 | loss_func         | CrossEntropyLoss             | 0
-------------------------------------------------------------------
108 M     Trainable params
0         Non-trainable params
108 M     Total params
217.786   Total estimated model params size (MB)
Epoch 0, global step 3: 'val_acc' reached 0.94500 (best 0.94500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/AutogluonModels/ag-20230204_010023/epoch=0-step=3.ckpt' as top 3
/home/ci/opt/venv/lib/python3.8/site-packages/pytorch_lightning/utilities/cloud_io.py:33: LightningDeprecationWarning: pytorch_lightning.utilities.cloud_io.get_filesystem has been deprecated in v1.8.0 and will be removed in v1.10.0. Please use lightning_lite.utilities.cloud_io.get_filesystem instead.
  rank_zero_deprecation(
/home/ci/opt/venv/lib/python3.8/site-packages/pytorch_lightning/utilities/cloud_io.py:25: LightningDeprecationWarning: pytorch_lightning.utilities.cloud_io.atomic_save has been deprecated in v1.8.0 and will be removed in v1.10.0. This function is internal but you can copy over its implementation.
  rank_zero_deprecation(
Epoch 0, global step 7: 'val_acc' reached 0.95500 (best 0.95500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/AutogluonModels/ag-20230204_010023/epoch=0-step=7.ckpt' as top 3
Epoch 1, global step 10: 'val_acc' reached 0.95000 (best 0.95500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/AutogluonModels/ag-20230204_010023/epoch=1-step=10.ckpt' as top 3
Time limit reached. Elapsed time is 0:00:30. Signaling Trainer to stop.
Epoch 1, global step 12: 'val_acc' reached 0.96500 (best 0.96500), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/AutogluonModels/ag-20230204_010023/epoch=1-step=12.ckpt' as top 3
Global seed set to 123
Global seed set to 123
Global seed set to 123
Global seed set to 123
```

{'acc': 0.893348623853211, 'f1': 0.9001074113856069}

Sentence Similarity Task

Next, let’s use MultiModalPredictor to train a model for evaluating how semantically similar two sentences are. We use the Semantic Textual Similarity Benchmark dataset for illustration.

```python
sts_train_data = load_pd.load('https://autogluon-text.s3-accelerate.amazonaws.com/glue/sts/train.parquet')[['sentence1', 'sentence2', 'score']]
sts_test_data = load_pd.load('https://autogluon-text.s3-accelerate.amazonaws.com/glue/sts/dev.parquet')[['sentence1', 'sentence2', 'score']]
sts_train_data.head(10)
```

| | sentence1 | sentence2 | score |
|---|---|---|---|
| 0 | A plane is taking off. | An air plane is taking off. | 5.00 |
| 1 | A man is playing a large flute. | A man is playing a flute. | 3.80 |
| 2 | A man is spreading shreded cheese on a pizza. | A man is spreading shredded cheese on an uncoo... | 3.80 |
| 3 | Three men are playing chess. | Two men are playing chess. | 2.60 |
| 4 | A man is playing the cello. | A man seated is playing the cello. | 4.25 |
| 5 | Some men are fighting. | Two men are fighting. | 4.25 |
| 6 | A man is smoking. | A man is skating. | 0.50 |
| 7 | The man is playing the piano. | The man is playing the guitar. | 1.60 |
| 8 | A man is playing on a guitar and singing. | A woman is playing an acoustic guitar and sing... | 2.20 |
| 9 | A person is throwing a cat on to the ceiling. | A person throws a cat on the ceiling. | 5.00 |

In this data, the column named score contains numerical values (which we’d like to predict) that are human-annotated similarity scores for each given pair of sentences.

```python
print('Min score=', min(sts_train_data['score']), ', Max score=', max(sts_train_data['score']))
```

Min score= 0.0 , Max score= 5.0

Let’s train a regression model to predict these scores. Note that we only need to specify the label column; AutoGluon automatically determines the type of prediction problem and an appropriate loss function. Once again, you should increase the short time_limit below to obtain reasonable performance in your own applications.

```python
sts_model_path = f"./tmp/{uuid.uuid4().hex}-automm_sts"
predictor_sts = MultiModalPredictor(label='score', path=sts_model_path)
predictor_sts.fit(sts_train_data, time_limit=60)
```

```text
Global seed set to 123
Auto select gpus: [0]
Using 16bit native Automatic Mixed Precision (AMP)
GPU available: True (cuda), used: True
TPU available: False, using: 0 TPU cores
IPU available: False, using: 0 IPUs
HPU available: False, using: 0 HPUs
LOCAL_RANK: 0 - CUDA_VISIBLE_DEVICES: [0]

  | Name              | Type                         | Params
-------------------------------------------------------------------
0 | model             | HFAutoModelForTextPrediction | 108 M
1 | validation_metric | MeanSquaredError             | 0
2 | loss_func         | MSELoss                      | 0
-------------------------------------------------------------------
108 M     Trainable params
0         Non-trainable params
108 M     Total params
217.785   Total estimated model params size (MB)
Epoch 0, global step 20: 'val_rmse' reached 0.60486 (best 0.60486), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/a4cc333a6c5d4cbfbcd533fb0beee5e7-automm_sts/epoch=0-step=20.ckpt' as top 3
/home/ci/opt/venv/lib/python3.8/site-packages/pytorch_lightning/utilities/cloud_io.py:33: LightningDeprecationWarning: pytorch_lightning.utilities.cloud_io.get_filesystem has been deprecated in v1.8.0 and will be removed in v1.10.0. Please use lightning_lite.utilities.cloud_io.get_filesystem instead.
  rank_zero_deprecation(
/home/ci/opt/venv/lib/python3.8/site-packages/pytorch_lightning/utilities/cloud_io.py:25: LightningDeprecationWarning: pytorch_lightning.utilities.cloud_io.atomic_save has been deprecated in v1.8.0 and will be removed in v1.10.0. This function is internal but you can copy over its implementation.
  rank_zero_deprecation(
Epoch 0, global step 40: 'val_rmse' reached 0.50467 (best 0.50467), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/a4cc333a6c5d4cbfbcd533fb0beee5e7-automm_sts/epoch=0-step=40.ckpt' as top 3
Time limit reached. Elapsed time is 0:01:00. Signaling Trainer to stop.
Epoch 1, global step 58: 'val_rmse' reached 0.45782 (best 0.45782), saving model to '/home/ci/autogluon/docs/_build/eval/tutorials/multimodal/text_prediction/tmp/a4cc333a6c5d4cbfbcd533fb0beee5e7-automm_sts/epoch=1-step=58.ckpt' as top 3
Global seed set to 123
Global seed set to 123
Global seed set to 123
```

<autogluon.multimodal.predictor.MultiModalPredictor at 0x7f49202cf130>

We again evaluate our trained model’s performance on separate test data. Below we choose to compute the following metrics: RMSE, Pearson Correlation, and Spearman Correlation.

```python
test_score = predictor_sts.evaluate(sts_test_data, metrics=['rmse', 'pearsonr', 'spearmanr'])
print('RMSE = {:.2f}'.format(test_score['rmse']))
print('PEARSONR = {:.4f}'.format(test_score['pearsonr']))
print('SPEARMANR = {:.4f}'.format(test_score['spearmanr']))
```

Global seed set to 123

RMSE = 0.71

PEARSONR = 0.8892

SPEARMANR = 0.8916
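The three regression metrics reported above follow textbook definitions, sketched below in plain Python on toy scores. We assume, without having checked AutoGluon's source, that its `'rmse'`, `'pearsonr'`, and `'spearmanr'` match these definitions, with tied values given the average of their ranks as in `scipy.stats.spearmanr`.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error: typical size of a prediction error, in label units."""
    n = len(y_true)
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)


def pearsonr(x, y):
    """Pearson correlation: covariance over the product of standard deviations.
    Raises ZeroDivisionError if either input is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def ranks(values):
    """1-based ranks; tied values share the average of the ranks they span."""
    order = sorted(range(len(values)), key=values.__getitem__)
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg_rank
        i = j + 1
    return result


def spearmanr(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearsonr(ranks(x), ranks(y))


# Toy similarity scores on the same 0-5 scale as the STS data (not real predictions).
y_true = [5.0, 3.8, 2.6, 0.5]
y_pred = [4.6, 3.9, 2.0, 1.0]
print('RMSE = {:.2f}'.format(rmse(y_true, y_pred)))
print('PEARSONR = {:.4f}'.format(pearsonr(y_true, y_pred)))
print('SPEARMANR = {:.4f}'.format(spearmanr(y_true, y_pred)))
```

RMSE is in the units of the score, so 0.71 on a 0 to 5 scale is easy to interpret; the two correlations instead measure how well the predicted scores track the ordering (Spearman) or linear trend (Pearson) of the human scores.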

Let’s use our model to predict the similarity scores between the first sentence below and each of the others.

```python
sentences = ['The child is riding a horse.',
             'The young boy is riding a horse.',
             'The young man is riding a horse.',
             'The young man is riding a bicycle.']

score1 = predictor_sts.predict({'sentence1': [sentences[0]],
                                'sentence2': [sentences[1]]}, as_pandas=False)
score2 = predictor_sts.predict({'sentence1': [sentences[0]],
                                'sentence2': [sentences[2]]}, as_pandas=False)
score3 = predictor_sts.predict({'sentence1': [sentences[0]],
                                'sentence2': [sentences[3]]}, as_pandas=False)
print(score1, score2, score3)
```

Global seed set to 123

Global seed set to 123

Global seed set to 123

4.4920683 3.5774941 1.0452672
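The three predict() calls above each score a single pair. Since the SST example earlier passes two sentences to predict() in one call, the pairs can presumably also be scored in one call by putting them in the same table. The hypothetical helper `pairs_against_first` builds that table; the final predict() line is left as a comment because it needs the trained `predictor_sts`.

```python
sentences = ['The child is riding a horse.',
             'The young boy is riding a horse.',
             'The young man is riding a horse.',
             'The young man is riding a bicycle.']


def pairs_against_first(sentences):
    """Hypothetical helper: one table pairing sentences[0] with each other sentence."""
    others = sentences[1:]
    return {
        'sentence1': [sentences[0]] * len(others),  # repeat the reference sentence
        'sentence2': list(others),
    }


batch = pairs_against_first(sentences)
for s1, s2 in zip(batch['sentence1'], batch['sentence2']):
    print(s1, '<->', s2)

# With the predictor trained above (not run here), one call would score all pairs:
# scores = predictor_sts.predict(batch, as_pandas=False)
```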

Although MultiModalPredictor currently supports classification and regression tasks, it can be used directly for many NLP tasks if you properly format them into a data table. Note that the data table can contain many text columns. Refer to the MultiModalPredictor documentation to see all available methods/options.
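As an illustration of formatting another NLP task as a table, the sketch below turns hypothetical (question, answer, label) triples for answer-relevance classification into a table with two text columns and a label column. The data, the column names, and the `to_table` helper are invented for this example; the resulting dict of lists could be wrapped in `pandas.DataFrame` and passed to fit() with `label='label'`, just as the SST table was.

```python
# Hypothetical raw data: (question, candidate answer, is_relevant) triples.
raw_examples = [
    ("What is the capital of France?", "Paris is the capital of France.", 1),
    ("What is the capital of France?", "The Seine flows through Paris.", 0),
    ("Who wrote Hamlet?", "Hamlet was written by William Shakespeare.", 1),
]


def to_table(examples, text_columns, label_column):
    """Turn tuples into a column-oriented table: one column per text field, plus the label."""
    columns = list(text_columns) + [label_column]
    table = {name: [] for name in columns}
    for example in examples:
        if len(example) != len(columns):
            raise ValueError(f"expected {len(columns)} fields, got {len(example)}")
        for name, value in zip(columns, example):
            table[name].append(value)
    return table


qa_table = to_table(raw_examples, text_columns=["question", "answer"], label_column="label")
print(qa_table["label"])
```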

Unlike TabularPredictor, which trains and ensembles different types of models, MultiModalPredictor focuses on selecting and fine-tuning deep learning based models. Internally, it integrates with timm, huggingface/transformers, and openai/clip as its model zoo.

Other Examples

See AutoMM Examples to explore other examples of AutoMM.

Customization

To learn how to customize AutoMM, please refer to Customize AutoMM.